Blistering IOPS at a sensible price

December 5th, 2014

Storage has come on in leaps and bounds over the last few years, especially now that ‘all flash arrays’, virtualised storage and software-defined storage solutions are being pushed. This is all fuelled by demand for more input/output operations per second (IOPS), scalability, service automation and increased capacity.

The All-Flash Array

‘All flash arrays’ are a great way of maximising IOPS, but as with all performance there’s a price attached: the cost per GB of storage rises significantly.

You will find all flash arrays capable of 300,000 IOPS and upwards, sustained across reads and writes with latency below one millisecond. But buying an ‘all flash array’ and putting it in your environment may not deliver the performance you expect. You need to look at the infrastructure servicing the storage network. There is no point in installing the best flash storage array on the market if the supporting network bandwidth and host HBAs are not fast enough to make use of it.
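To make the bottleneck concrete, here is a rough back-of-envelope sketch. The 8 KiB I/O size and the figure of roughly 800 MB/s usable per 8Gb Fibre Channel port (one direction) are my assumptions for illustration, not measurements from any particular environment, and the function names are hypothetical.

```python
# Rough check: can the host links carry what the array can generate?
# Assumptions (illustrative only): 8 KiB I/O size and about 800 MB/s of
# usable one-direction bandwidth per 8Gb Fibre Channel port.

def required_mb_per_s(iops: int, block_size_kib: float) -> float:
    """Throughput in MB/s needed to sustain `iops` at the given I/O size."""
    return iops * block_size_kib * 1024 / 1_000_000


def link_capacity_mb_per_s(ports: int, mb_per_s_per_port: float = 800.0) -> float:
    """Aggregate one-direction bandwidth across the host's HBA ports."""
    return ports * mb_per_s_per_port


def max_iops_for_links(ports: int, block_size_kib: float,
                       mb_per_s_per_port: float = 800.0) -> int:
    """Highest IOPS the links can carry at the given I/O size."""
    capacity = link_capacity_mb_per_s(ports, mb_per_s_per_port)
    return int(capacity * 1_000_000 / (block_size_kib * 1024))


needed = required_mb_per_s(300_000, 8)   # what the array could push
available = link_capacity_mb_per_s(2)    # dual-port HBA
ceiling = max_iops_for_links(2, 8)       # IOPS the links cap you at

print(f"needed {needed:.1f} MB/s, available {available:.1f} MB/s")
print(f"link-limited ceiling: {ceiling} IOPS")
```

Under these assumptions a dual-port host tops out well below what the array can deliver, which is the point: size the network and HBAs before sizing the array.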

So in short, ‘all flash arrays’ can deliver outstanding performance for your business, but they will require significant buy-in to upgrade storage networks, and that’s before you include the cost of the array itself.

The Hybrid Array

Dot Hill has helped SMBs and SMEs in this area by bringing them high-performance storage at a reasonable price. Enter the Dot Hill 4004 series of SANs with its real-time tiering feature. The tiering firmware is the code from the more expensive Pro 5000 series unit, adapted to run on the 4004 series.

This storage array lets us forget about the expensive ‘all flash array’ and instead use real-time tiering to get the performance we require. Real-time tiering is a technology that monitors blocks on your disks and moves them to faster disk as and when required. The difference between Dot Hill’s solution and the competition is that it actually moves data in real time. Many competitors scan storage usage on a set schedule, such as every 4 hours, and only then move the data. The risk here is that you miss the point at which the data was hot and required faster I/O, resulting in the user experiencing poor performance. With Dot Hill’s real-time tiering option, data gets access to fast disk when it needs it, so you meet SLAs on application performance.
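The cost of a scheduled scan can be illustrated with a toy model. This is not Dot Hill’s algorithm or anyone else’s; it only compares how long a hot block sits on slow disk when the tiering engine reacts at different intervals. The burst times and the one-minute reaction interval are hypothetical values chosen for illustration.

```python
# Toy model only: NOT any vendor's tiering algorithm. It compares how long a
# block stays on slow disk when the engine evaluates blocks at different intervals.

def slow_tier_minutes(hot_start: int, hot_end: int, interval: int) -> int:
    """Minutes of the hot window [hot_start, hot_end) served from slow disk.

    The engine evaluates blocks every `interval` minutes (at 0, interval, ...)
    and promotes a block at the first evaluation after it has become hot.
    """
    # First evaluation strictly after the block became hot.
    first_check = (hot_start // interval + 1) * interval
    # If that check falls after the burst ended, the whole window was missed.
    return min(first_check, hot_end) - hot_start


# Hypothetical burst: the block is hot from minute 30 to minute 90.
scheduled = slow_tier_minutes(30, 90, interval=240)     # scan every 4 hours
near_real_time = slow_tier_minutes(30, 90, interval=1)  # assumed 1-minute reaction

print(f"scheduled scan: {scheduled} min on slow disk")
print(f"near real time: {near_real_time} min on slow disk")
```

In this sketch the four-hourly scan misses the entire burst, while the short interval leaves the block on slow disk for only the first minute.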

Dot Hill offers a range of configurations to suit your budget, including shelf size, autonomic tiering options, controller port speeds, and replication and snapshot licences. This is all in a modular format, so you only buy the options you require and are not forced to purchase, for example, replication licences when you don’t use replication on your storage arrays.

The Business Requirement

A scenario of how this solution can be tailored is as follows:

I am an IT Manager looking to upgrade a Windows Server 2003 file server to Windows Server 2012 R2, hosting 5TB of storage and growing daily.

I also have a database server that hosts many databases for my company applications. Recent performance issues have shown that it requires higher I/O.

As always, I need to do this on a tight budget but still deliver a solution that matches the scope set out by the business. Part of the budget is for storage to serve databases and file data. In order to spec a cost-effective storage option I would suggest these actions:

- Assess my backend storage network, in this case running 8Gb Fibre Channel

- Assess my hosts’ HBAs, in this case already using dual 8Gb Fibre Channel

- Look at storage requirements: existing capacity plus estimated growth over three years

- Measure database peak periods, which can see I/O bursting above 100,000 IOPS

- Measure the percentage of reads vs writes on all systems
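The three-year capacity estimate is simple compound growth. Here is a minimal sketch; the 20% annual growth rate is a placeholder assumption, so substitute the rate you actually measure on your file server.

```python
# Three-year capacity projection using compound growth.
# The 20% annual growth rate is an assumed placeholder, not a measured figure.

def projected_capacity_tb(current_tb: float, annual_growth: float, years: int) -> float:
    """Capacity needed after `years` of compound growth at `annual_growth`."""
    return current_tb * (1 + annual_growth) ** years


needed_tb = projected_capacity_tb(5.0, 0.20, 3)  # 5TB today, three-year horizon
print(f"Plan for roughly {needed_tb:.2f} TB of usable capacity")
```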

The Solution

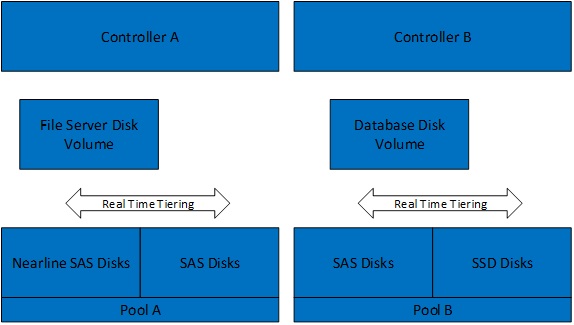

So to achieve this I would mix SAS, NL SAS and SSD disk in one array and create two tiering pools. A tiering pool is a group of base disks tiered according to disk speed. This is then presented as usable capacity for volumes to reside on and tier between as required in real time (see Fig 1).

So for the file servers in Pool A, I would create two tiers containing cheaper near-line SAS disk and faster SAS disk. The near-line SAS volume would make up roughly 85% of the required storage and the SAS volume would take the remaining 15%.
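As a quick sketch of the arithmetic, the split for Pool A works out as follows. The 10TB usable figure is a hypothetical pool size for illustration, and `split_pool` is just a helper name I have made up.

```python
# Split a pool's usable capacity between the near-line SAS and SAS tiers.
# The 10TB pool size is a hypothetical figure; 15% fast tier follows the text.

def split_pool(usable_tb: float, fast_fraction: float = 0.15) -> dict:
    """Return the capacity of each tier for a given fast-tier fraction."""
    fast = usable_tb * fast_fraction
    return {"nl_sas_tb": usable_tb - fast, "sas_tb": fast}


pool_a = split_pool(10.0)
print(f"NL SAS: {pool_a['nl_sas_tb']:.1f} TB, SAS: {pool_a['sas_tb']:.1f} TB")
```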

What we have achieved here is to use slower, cheaper disks for file data that is rarely accessed, while providing faster disks to cope with hot data, such as user profiles during login.

Now for the database servers in Pool B. Here we would build an array of SAS disk with a header tier of 400GB SSD storage. Again, this achieves high-performance I/O for the database server: at the time of bursting, the blocks move to the SSD, guaranteeing performance. Here I am achieving flash array performance but at a fraction of the cost.

Conclusion

By utilising hybrid arrays with real-time tiering we can provide I/O when required by our applications and file data.

To achieve this we tier our arrays with a higher percentage of storage on slower, cheaper disk and a header tier of faster, more expensive disk. The reason for this is that studies show that 90% of data written is never accessed again, so building large flash arrays for this type of data is not cost-effective. That’s not to say all flash arrays do not have their place. Indeed they do, but it’s a matter of knowing when and where to use them.

Dot Hill’s real-time tiering is a breakthrough for those small and mid-market firms who still require those heavy-hitting applications and virtualisation platforms. They can now be supported on a storage platform that won’t break the bank.

Dan Mulliss
